JavaScript (JS) Simulator Low-Code Case Study

Design case study on testing JS functions for mobile application development

Overview

About Zinier

Zinier makes field service automation software. The company focuses on solving common problems of speed and scale through AI-driven field service management.

Zinier's low-code suite of apps

Zinier's low-code platform helps developers and citizen developers build and deploy solutions quickly. It combines a wide choice of ready-to-go, prebuilt field service solutions, a low-code platform for building applications in-house, and a highly configurable AI engine for transformative predictive service, all in one.

Team Members

Product Manager: Abhijeet Anant Joshi

C-Suite Stakeholders: Gideon Simons

Engineering Stakeholders: Arjun Ramachandra, Ritesh Goyal, Tushar Raj

Product Designer & Researcher: Dhananjay Garg

Problem

Testing JS functions for mobile application development costs a developer about two weeks per project, including multiple escalations from the QA team. Integrating platform-level testing tools into Studio Z can significantly reduce this time: early estimates suggest that, if testing runs on the server, it could drop from two weeks per project to as little as one day.

Business Objectives

Decrease JS-Lib testing time by up to two weeks per project. This will reduce implementation time and add value for solution developers. It will also reduce the need to test things outside Studio Z.

Process

During the discovery and ideation phase, I carried out a detailed competitor study, ideated rapidly with paper wireframes, and collected as many problems and 📶 data metrics from user interviews as possible.

The engineering team was looped into the research and invited to attend all interview sessions, which helped gather developer feedback and insights.

After prototyping, I worked with engineers on a feasibility check of the designed solution, and with product managers to confirm that it could fit the existing development scope and roadmap. The product managers then communicated the feasibility checks and estimates provided by the engineering teams.

Final deliverables consisted of research documentation, wireframes, clickable prototypes, and high-fidelity Figma/Sketch files with slices turned on for exporting.

Research

Constraint: Android and iOS use different JS engines, so the same function can behave differently on each mobile OS. The server therefore needs to host these engines, run the JS passed to it, and send back the response.

Ideal Solution

➡️ Run one JS-Lib function on multiple engines.

➡️ This simulates running it on different devices.

➡️ Modify the JS-Lib so it runs successfully on both engines.
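The constraint and ideal solution above amount to a fan-out: the server receives one JS-Lib function plus a page context, runs it on each hosted engine, and returns one result per engine so that differences surface side by side. The Python sketch below assumes each engine is wrapped behind a callable; `simulate_on_engines`, `EngineResult`, and `engines_disagree` are hypothetical names, not part of Studio Z.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

# Hypothetical signature for a server-hosted engine: (js_source, page_context) -> result.
EngineRunner = Callable[[str, Dict[str, Any]], Any]


@dataclass
class EngineResult:
    engine: str          # label such as "android" or "ios"
    ok: bool             # False if the engine raised an error
    value: Any = None    # return value when ok is True
    error: str = ""      # error message when ok is False


def simulate_on_engines(
    js_lib: str, page_context: Dict[str, Any], engines: Dict[str, EngineRunner]
) -> List[EngineResult]:
    """Run the same JS-Lib source on every engine and collect one result per engine."""
    results: List[EngineResult] = []
    for name, run in engines.items():
        try:
            results.append(EngineResult(name, True, value=run(js_lib, page_context)))
        except Exception as exc:  # an engine failure is a result to report, not a crash
            results.append(EngineResult(name, False, error=str(exc)))
    return results


def engines_disagree(results: List[EngineResult]) -> bool:
    """True if engines differ in success or in returned value (error texts are ignored)."""
    outcomes = {(r.ok, repr(r.value) if r.ok else None) for r in results}
    return len(outcomes) > 1
```

Catching engine errors per engine, rather than letting one failure abort the request, keeps the response complete: a function that throws on one engine and succeeds on the other is exactly the case the simulator needs to show.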

Context Info

Rule engine support in Zinier: ES5/ES6. Not supported: ES8 (ES2017).

Currently, users have to procure a physical device and run the code on it.

Easy solution: run the JS-Lib in Chrome and Safari to approximate the results on each platform.
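Because the rule engine supports ES5/ES6 but not ES8 (ES2017), a cheap pre-check before simulation could flag ES2017 features in a JS-Lib. This is a rough Python heuristic, not a parser: it can report false positives inside strings or comments, covers only a few ES2017 features, and `find_es2017_features` is a hypothetical helper rather than an existing Studio Z check.

```python
import re
from typing import List

# A few ES2017 (ES8) features the rule engine would reject. Regexes are a rough heuristic:
# they also match inside strings and comments and do not cover every ES2017 feature.
ES2017_PATTERNS = {
    "async functions": re.compile(r"\basync\b"),
    "await": re.compile(r"\bawait\b"),
    "Object.values": re.compile(r"\bObject\.values\s*\("),
    "Object.entries": re.compile(r"\bObject\.entries\s*\("),
    "padStart/padEnd": re.compile(r"\.pad(?:Start|End)\s*\("),
    "Object.getOwnPropertyDescriptors": re.compile(r"\bObject\.getOwnPropertyDescriptors\s*\("),
}


def find_es2017_features(source: str) -> List[str]:
    """Return the names of ES2017 features that appear to be used in a JS-Lib source."""
    return [name for name, pattern in ES2017_PATTERNS.items() if pattern.search(source)]
```

A non-empty result could be shown as a warning next to the JS-Lib before the simulation runs.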

Front-end libraries: Selenium

We cannot use them because we supply our own page context, which these libraries do not support.

With libraries, no server support would be needed.

However, a library does not understand pageContext, and the page context is required to initialize the JS-Lib function.
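Since front-end tools cannot supply the page context, the server has to inject it before running a JS-Lib function. A minimal Python sketch, assuming JS-Lib functions take the page context as their only argument: it wraps the library source and the call in an ES5 harness that a hosted engine could evaluate. `build_harness` is a hypothetical helper.

```python
import json
import re
from typing import Any, Dict

# Rough JS identifier check (ASCII only), so the function name cannot inject extra code.
_JS_IDENTIFIER = re.compile(r"[A-Za-z_$][A-Za-z0-9_$]*")


def build_harness(js_lib_source: str, function_name: str, page_context: Dict[str, Any]) -> str:
    """Wrap a JS-Lib function in an ES5 harness that initializes it with a page context.

    Assumes the function takes the page context as its only argument. The result is
    passed through JSON.stringify so every engine returns comparable text.
    """
    if not _JS_IDENTIFIER.fullmatch(function_name):
        raise ValueError("not a plain JS identifier: %r" % function_name)
    # json.dumps output (ASCII-escaped by default) is also a valid JS object literal.
    context_literal = json.dumps(page_context)
    return "\n".join([
        "(function () {",
        "  var pageContext = " + context_literal + ";",
        js_lib_source,
        "  return JSON.stringify(" + function_name + "(pageContext));",
        "})();",
    ])
```

Serializing the result with `JSON.stringify` inside the harness means each engine hands back plain text, which makes per-engine results easy to compare.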

Simulating JS-Lib code against a dynamic Mobile Page Builder (MPB) page is not possible without server support.

With a front-end-only solution, the user has to run the code in Safari and Chrome separately. This is clunky, since devs have to install or switch browsers and log in to the same org to simulate the JS code.

Either way, the solution (JS Simulator) needs to be integrated into the Mobile Page Builder.

The JS Simulator is not immediately needed for web pages, because devs already simulate JS code on the web using Chrome DevTools.

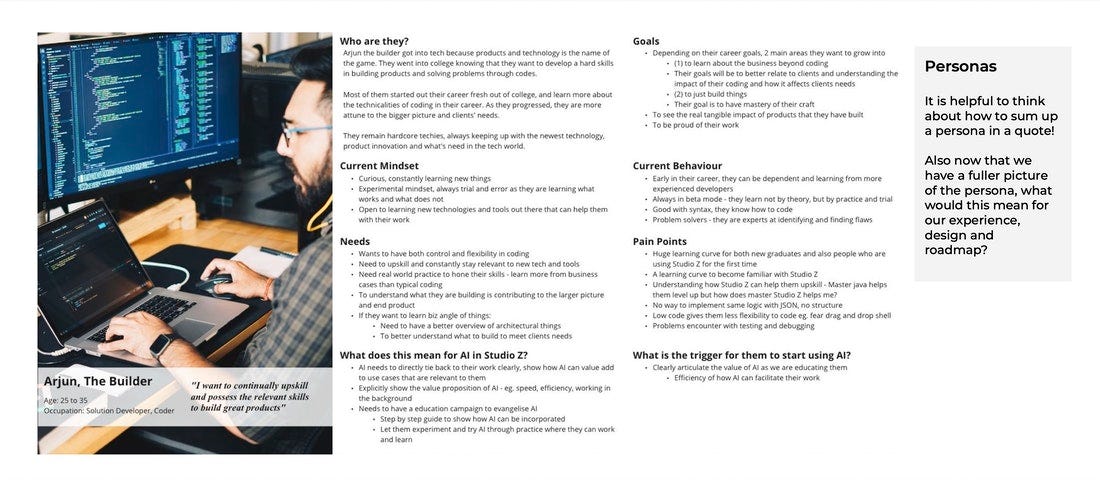

🤹 Target Customer/Personas

Solution Developers

Platform QA

🔎 Requirement Gathering

🕵️♂️ Research Suspects

Technical Architect: Arjun Ramachandra

Solution Development Lead: Dharamjeet Kumar

Senior Solution Developer: Anubhav Sharma

Senior Development Lead: Vipin Mohan

💎 Research Log

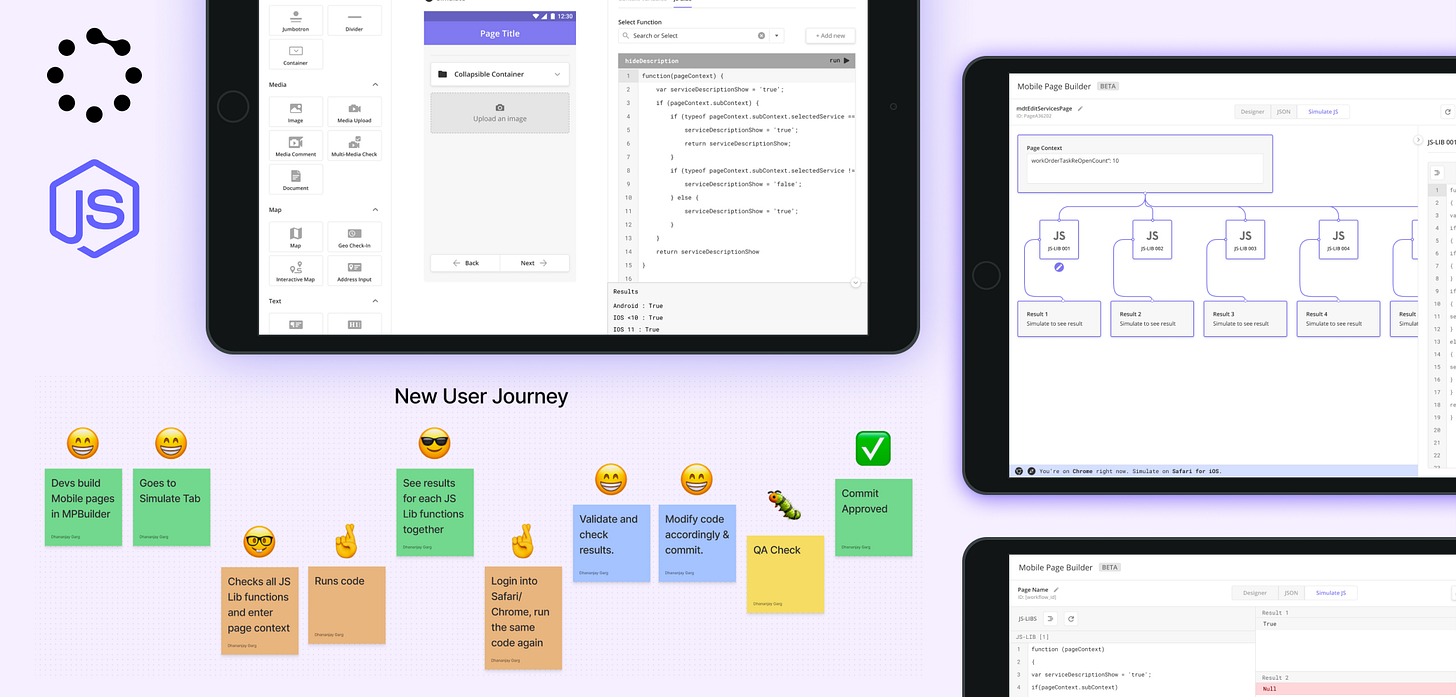

🚗 User Journey Mapping & Outcomes

Current user journey: Devs write JS code ➡️ Run the code on a Node.js server ➡️ Validate the code and commit ➡️ QA check ➡️ If QA fails ➡️ Devs try to procure a physical device to test the code, or rely on QA notes ➡️ Test, validate, commit ➡️ Repeat the process

New journey: Devs go to the MPB ➡️ Open the Page Simulator ➡️ Select one or all JS-Lib functions (fetched automatically from the page JSON) ➡️ Enter the page context ➡️ Simulate the selected code ➡️ View the results for each function ➡️ Repeat the process in the other browser (Chrome/Safari)
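The "fetched automatically from JSON" step could look roughly like the sketch below. It assumes, hypothetically, that the page JSON keeps JS-Lib entries under a `jsLibs` array with `name` and `code` fields; the real Mobile Page Builder schema is not described in this case study, and `extract_js_libs` and `select_js_libs` are illustrative names.

```python
import json
from typing import Dict, Iterable, Optional


def extract_js_libs(page_json: str) -> Dict[str, str]:
    """Map JS-Lib name -> source, assuming a hypothetical {"jsLibs": [{"name", "code"}]} layout."""
    page = json.loads(page_json)
    return {lib["name"]: lib["code"] for lib in page.get("jsLibs", [])}


def select_js_libs(libs: Dict[str, str], names: Optional[Iterable[str]] = None) -> Dict[str, str]:
    """Return all JS-Libs when names is None, otherwise only the named ones (in the given order)."""
    if names is None:
        return dict(libs)
    wanted = list(names)
    missing = [n for n in wanted if n not in libs]
    if missing:
        raise KeyError("unknown JS-Lib(s): " + ", ".join(missing))
    return {n: libs[n] for n in wanted}
```

Passing `names=None` models the "select all" option, while a list of names models selecting individual functions.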

📍 Prioritization / Roadmap

Once development is complete, this codebase can be reused across the platform to support JS simulation at the workflow level and in the Web Page Builder (WPB).

Once it supports all JS simulation types, the JS Simulator can also be decoupled from the MPB and offered as a universal tool.

Solution

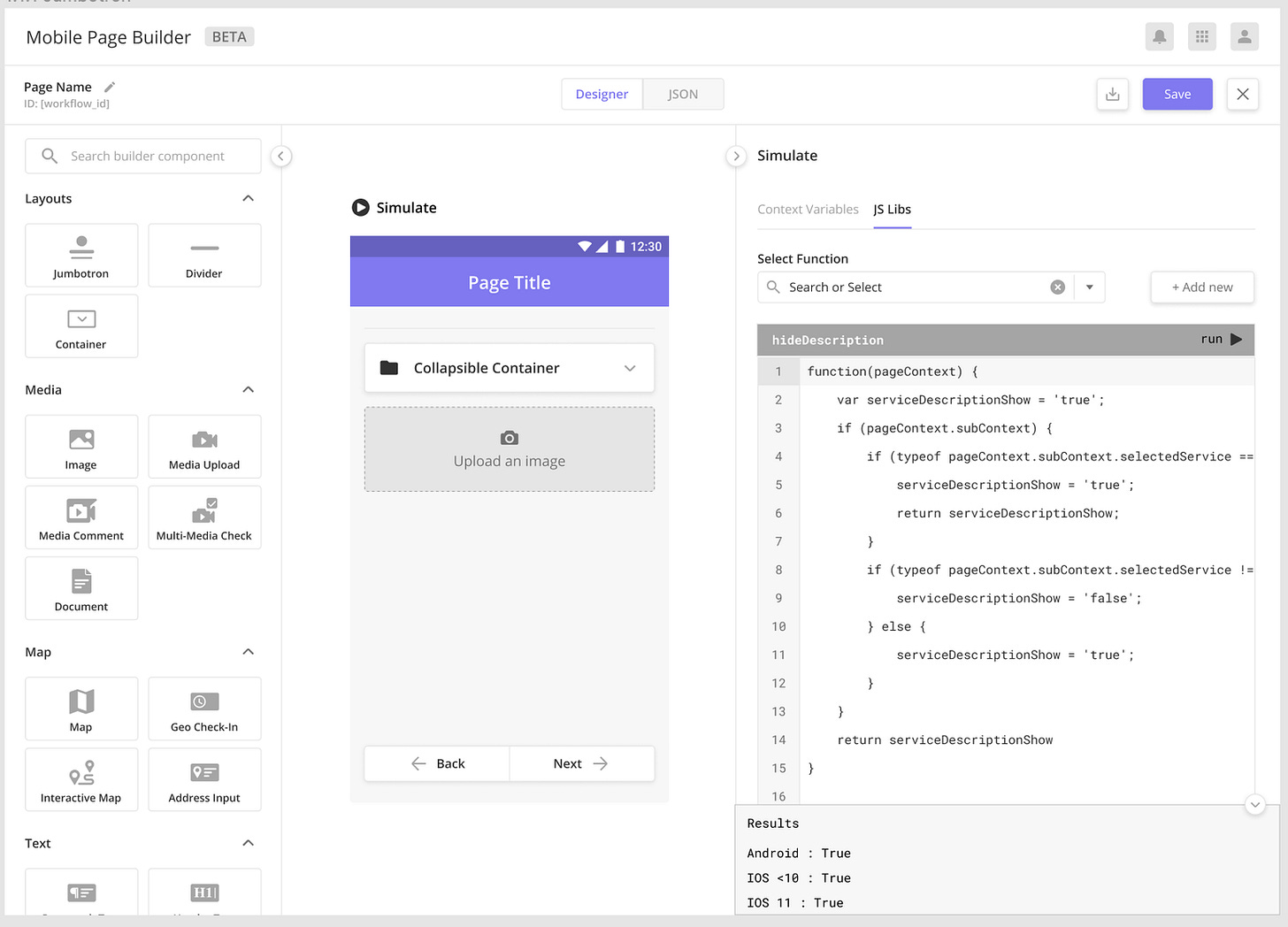

3️⃣ Version 3.0 [Final Implementation]

2️⃣ Version 2.0

1️⃣ Version 1.0

✏️ Feedback Received

JS-Libs should be editable, and edited code should be saved to the JSON tab.

Page Context should appear above the JS-Libs.

The page should be split 50:50 between Inputs and Results.

Each JS-Lib should be aligned with its corresponding result.

Sections need clearer visual separation.

Detect whether the user is on Chrome or Safari, and prompt them to switch browsers to test the other platform (iOS or Android).
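The browser-detection feedback can be handled from the User-Agent string, which the page can read in the browser or the server can read from the request header. A rough Python sketch of the classification logic follows; User-Agent sniffing is brittle, `detect_browser` and `switch_hint` are hypothetical names, and the mapping of Chrome to Android and Safari to iOS follows the approach taken in this case study.

```python
from typing import Optional


def detect_browser(user_agent: str) -> Optional[str]:
    """Classify a desktop User-Agent string as "chrome", "safari", or None (anything else)."""
    # Edge and Opera are Chromium-based and also send "Chrome/"; treat them as other browsers.
    if "Edg/" in user_agent or "OPR/" in user_agent:
        return None
    if "Chrome/" in user_agent:
        return "chrome"
    # Safari sends "Version/x.y Safari/..." without a "Chrome/" token.
    if "Safari/" in user_agent and "Version/" in user_agent:
        return "safari"
    return None


def switch_hint(user_agent: str) -> str:
    """Message prompting the developer to repeat the simulation in the other browser."""
    browser = detect_browser(user_agent)
    if browser == "chrome":
        return "You are on Chrome (Android approximation). Repeat the simulation in Safari to check iOS."
    if browser == "safari":
        return "You are on Safari (iOS approximation). Repeat the simulation in Chrome to check Android."
    return "Open the simulator in Chrome (Android) or Safari (iOS) to test mobile behavior."
```

The order of checks matters: Chrome's User-Agent also contains "Safari/", so the Chrome test has to run before the Safari test.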

🌊 Flow

Devs go to the MPB ➡️ Open the Page Simulator ➡️ Select one or all JS-Lib functions (fetched automatically from the page JSON) ➡️ Enter the page context ➡️ Simulate the selected code ➡️ View the results for each function ➡️ Repeat the process in the other browser (Chrome/Safari)

❌ Technical Limitation

Developers need to run the same code in two different windows and two different browsers, Safari and Chrome, to use their respective engines to emulate code validation on iOS and Android.

User Tested Stories

Outcomes & Key Results

Design Outcome: Decreased effort for Mobile Development Testing.

Key Result: Mobile development testing became 1 to 1.5 weeks per project faster.

Design Outcome: Enhanced developer efficiency.

Key Result: 50% reduction in JS-Lib/JavaScript code debugging time.

Design Outcome: Reduced the need to test things outside Studio Z (qualitative outcome).

Key Result: Solution Developers spent more time developing and testing Mobile Projects inside the Studio Z environment.

Product Specs

Introduced JavaScript simulation capabilities in Studio Z to improve developer productivity. The feature affects all solution developers building applications with Studio Z.

RISCE score = 64

Reach = 10

Impact = 4

Strategic alignment = 4

Confidence = 80

Effort = 2
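The listed values reproduce the score of 64 if RISCE is read as a RICE-style formula with Strategic alignment as an extra multiplier and Confidence taken as a percentage: (10 × 4 × 4 × 0.80) / 2 = 64. That reading is an assumption inferred from the numbers, not a stated definition; a minimal Python sketch:

```python
def risce_score(reach: float, impact: float, strategic: float, confidence_pct: float, effort: float) -> float:
    """Assumed RICE-style score with an extra Strategic alignment factor; confidence is a percentage."""
    if effort <= 0:
        raise ValueError("effort must be positive")
    return reach * impact * strategic * confidence_pct / 100 / effort


print(risce_score(reach=10, impact=4, strategic=4, confidence_pct=80, effort=2))  # → 64.0
```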

My Role

I contributed to the project as a Product Designer and UX Researcher. In this role, I worked with engineering team members, interviewed and learned from the affected personas, and designed wireframes and prototypes that were eventually built into the product roadmap and platform.